3D Printer Arrives

I finished my last blog with a comment that I might consider getting a 3D printer. Consider I did and gave myself a Christmas present!

After some research I went for a QIDI X-MAX3. It has good reviews and is near the top end of home machines (Qidi even describe it as commercial). Cheaper machines are OK printing with PLA, but this isn’t very durable outside. The X-MAX3 seems capable of printing almost any material. It’s also big, with a printing volume of over 300mm cubed.

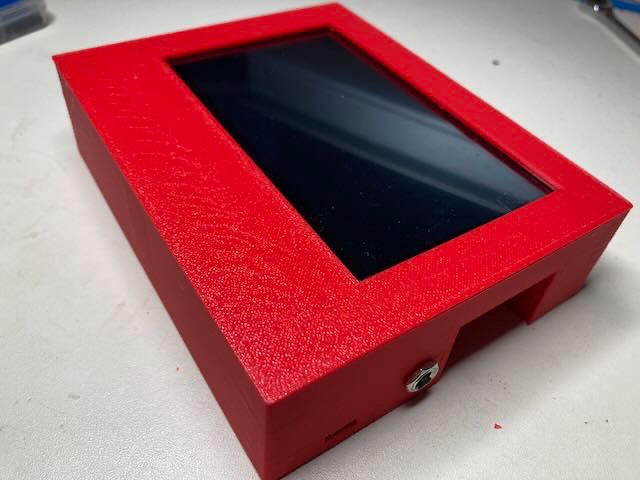

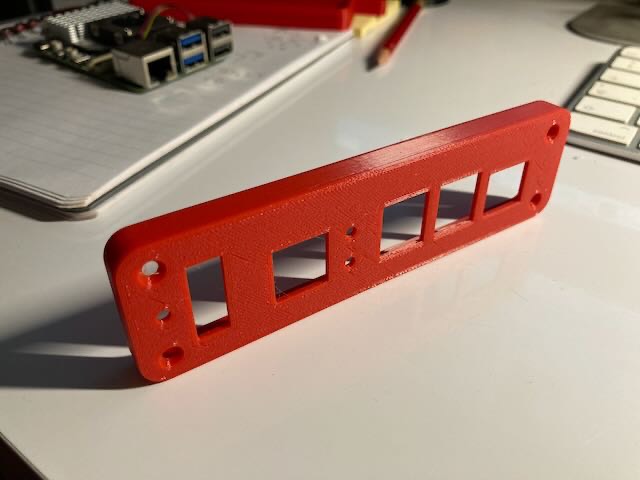

Now I have to learn how to use it (the manual isn’t very detailed) and learn to design in 3D. First efforts are OK. The eFinder display box I described in my last blog cost £18 and took 4 hours to modify. Now that I have designed a printed version, each copy costs about £1 and requires none of my own time.

New eFinder display ready

I couldn't find any plastic boxes the right size, so I used a rather nice pre-painted aluminium alloy die-cast box. The connectors on the Pi are in a rather awkward place, so I had to cut a rebate in the back of the box, and then braze up a cover. It turned out OK, but it makes me think I need to get a 3D printer!

New eFinder display and the new Raspberry Pi5

I’ve always used and recommended AMOLED type displays for astronomy devices. Not needing a backlight, their contrast is very high and their black is absolutely black. Great for dark adaptation and very readable. I use a Samsung tab A2 tablet to run SkySafari while observing as it has an excellent AMOLED display panel.

Recently AMOLED display modules have become available and affordable for the DIY market. A 5” display with 900x544 resolution and touch control from Waveshare looked interesting, so I decided to build an eFinder with one of these displays ‘built in’.

This coincided with my receipt of one of the first Raspberry Pi5s to be delivered in the UK. This new model looks very similar to the previous one, but is very fast. Initial testing showed it would plate solve about three times quicker (i.e. in about 0.6 seconds). It needs the new Bookworm OS, which I found requires a more structured approach to its setup (virtual environments), plus it had a few bugs!

The AMOLED display really needed a new eFinder GUI display to make the most of its potential. This turned out to be quite a rewrite, but I took the opportunity to make it more user friendly and slicker.

Photo to the right is the display connected to a Pi4 during evaluation.

Below is the finished new GUI running. The photo makes the star image background look brighter than it is to the eye. Next job is to put it in a box.

November at Haw Wood Farm

The Autumn star party at Haw Wood Farm almost had to be cancelled due to waterlogged ground. The forecast for the week didn’t look great either. But the site managers Dan & Georgina pulled out all the stops and moved us to their gravel pitches, and asked others nearby to keep their lights down.

This worked well, and amazingly we were able to observe on 5 out of 7 nights, including some of the steadiest seeing I’ve seen in the UK.

My own ScopeDog drive (18” UC) with integrated eFinder worked brilliantly. A friend’s 15” Classic Obsession that I had installed ScopeDog with eFinder on during the summer also worked well, although the owner was still on the learning curve. A standalone eFinder I fitted to a friend’s 22” UC Obsession had its first use and on its first night bagged about 50 targets, including some that are very difficult to find.

The eFinder is now mature!

October 2023

Autumn Kelling Star Party wasn’t great weather-wise. Only a couple of clear nights, but one was very transparent. Good to see everyone again though.

I spent most of the observing time helping some friends who have ScopeDogs and eFinders. I realise the instructions I wrote can assume too much inside knowledge!

Haw Wood Farm next month and I hope to get some ‘me’ time observing!

No large changes to report on new code or hardware. Summer was busy with grandchildren and dealing with grey squirrels. The latter really can be destructive pests.

About a dozen people are currently building their own ScopeDogs or eFinders, which is satisfying for me. It keeps me busy answering their questions.

Spring at last

Kelling Heath astro camp wasn’t too bad weather-wise.

More importantly the mk3 ScopeDog continued to perform OK. I tried out some new altitude drive rollers which had Shore70 hardness urethane sleeves. These worked incredibly well.

Summer break from observing now, so time to get busy in the workshop.

More new mathematics

Since my mk2 ScopeDog I had been calculating tracking rates using classic spherical trigonometry. Quite straightforward and the method used in professional observatories. But their scope mounts are level!
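For a level mount, the classic rates can be sketched in a few lines of Python. This is only an illustration, not the ScopeDog code: the function name `altaz_rates` and the convention of measuring azimuth from north through east are my assumptions here, and all angles are in radians.

```python
import math

# One rotation of the sky takes a sidereal day (about 86164.09 s).
SIDEREAL_RATE = 2.0 * math.pi / 86164.0905  # radians per second


def altaz_rates(latitude, azimuth, altitude):
    """Tracking rates (rad/s) in azimuth and altitude for a LEVEL alt-az mount.

    Assumes azimuth is measured from north through east.
    No allowance is made for mount tilt or refraction.
    """
    az_rate = SIDEREAL_RATE * (
        math.sin(latitude)
        - math.cos(latitude) * math.cos(azimuth) * math.tan(altitude)
    )
    alt_rate = SIDEREAL_RATE * math.cos(latitude) * math.sin(azimuth)
    return az_rate, alt_rate


# Example: latitude 52 N, target due south at 40 degrees altitude.
az_rate, alt_rate = altaz_rates(
    math.radians(52.0), math.radians(180.0), math.radians(40.0)
)
arcsec = math.degrees(1.0) * 3600.0  # arcseconds per radian
print(f"Az rate {az_rate * arcsec:.2f} arcsec/s, Alt rate {alt_rate * arcsec:.2f} arcsec/s")
```

Note the `tan(altitude)` term: the azimuth rate grows without limit towards the zenith, and both rates assume the azimuth axis points exactly at the zenith.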

Whilst the Nexus DSC takes care of positional accuracy with a tilted mount, and the digital finder measures absolute position for refinements, the calculated tracking rates in Az & Alt weren’t taking account of any tilt. This means the scope needs to be fairly well levelled when set up. Any tilt results in the tracking slowly losing the target over a period of some minutes.

With the mk4 ScopeDog (no Nexus DSC) I really needed to do my own 2 star alignment to determine tilt and improve tracking. A couple of days thinking how to do it weren’t very fruitful - just a headache!

Serge at Astrodevices suggested a few ideas, and a little while later I found an excellent document written by Toshimi Taki that covers it - but it was all based on matrix operations. I’d completely forgotten how to do this - so a day of revision followed. Fortunately Python has a lot of matrix operation methods available through NumPy.

Having coded the technique and tried it on some test data - I’m impressed and keen to incorporate it into ScopeDog mk4, and possibly mk3.
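To give a flavour of the matrix approach, here is a minimal sketch of a 2 star alignment in the spirit of that method. It is not the ScopeDog code. All the function names are made up for illustration, both coordinate frames are assumed to be right-handed, and sky rotation between the two sightings is ignored. I have used plain Python so it runs anywhere; NumPy would shorten the matrix helpers considerably.

```python
import math


def unit_vector(lon, lat):
    """Direction cosines for a pair of angles in radians (e.g. RA/Dec or Az/Alt)."""
    return [math.cos(lat) * math.cos(lon), math.cos(lat) * math.sin(lon), math.sin(lat)]


def cross_unit(a, b):
    """Normalised cross product, used as the third reference direction."""
    c = [a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0]]
    norm = math.sqrt(sum(x * x for x in c))
    return [x / norm for x in c]


def from_columns(c1, c2, c3):
    """Build a 3x3 matrix whose columns are the three given vectors."""
    return [[c1[i], c2[i], c3[i]] for i in range(3)]


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]


def inverse3(m):
    """Inverse of a 3x3 matrix via its adjugate."""
    (a, b, c), (d, e, f), (g, h, i) = m
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    adj = [[e * i - f * h, c * h - b * i, b * f - c * e],
           [f * g - d * i, a * i - c * g, c * d - a * f],
           [d * h - e * g, b * g - a * h, a * e - b * d]]
    return [[x / det for x in row] for row in adj]


def apply(matrix, v):
    return [sum(matrix[i][k] * v[k] for k in range(3)) for i in range(3)]


def alignment_matrix(sky1, sky2, scope1, scope2):
    """Matrix T such that scope_vector = T * sky_vector, from two sighted stars."""
    sky = from_columns(sky1, sky2, cross_unit(sky1, sky2))
    scope = from_columns(scope1, scope2, cross_unit(scope1, scope2))
    return matmul(scope, inverse3(sky))


# Test data: pretend the mount is tilted 2 degrees about its x axis.
t = math.radians(2.0)
tilt = [[1.0, 0.0, 0.0], [0.0, math.cos(t), -math.sin(t)], [0.0, math.sin(t), math.cos(t)]]
star1, star2 = unit_vector(0.3, 0.5), unit_vector(1.9, 0.9)
T = alignment_matrix(star1, star2, apply(tilt, star1), apply(tilt, star2))

# A third star, not used in the alignment, should now map correctly.
star3 = unit_vector(4.0, 0.2)
print(apply(T, star3))
print(apply(tilt, star3))
```

With perfect test data the two printed vectors agree. With real sightings the recovered matrix absorbs the tilt, and the quality of the result depends on how far apart the two stars are.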

mk4 ScopeDog - no encoders!

All these versions of ScopeDog are a way of marking significant developments in the design and functionality.

A recap of versions so far.

mk1 - almost 10 years old now. Built around now obsolete Phidget modules, built in GPS, Pi3 and code written in Java.

mk2 - First appeared in 2021. New Phidget modules allowed a re-packaging into a much smaller box. Pi4 running code in Java. Closer integration with the Nexus DSC meant no GPS needed.

mk3 - 2022. Same basic hardware as mk2 but code now in Python. Digital finder functionality built in. New hand paddle with OLED display and digital finder controls. Vastly improved pointing accuracy and ease of initial alignment.

So why a mk4?

Based on experiences with the mk3, I could see the potential to remove the need for mount encoders completely. The digital finder can quickly determine absolute telescope position and the drive stepper motors can manage position in between solves.

With a week confined to a caravan at a rainy astro camp, the code rewrite made good progress. Currently I can just power up the scope, point it at an object, and 3 seconds later it is tracking. No alignment needed! I can then do a goto to a new target, which is initially accomplished using stepper motor step counts, but once that is done, a plate-solve automatically corrects any errors and puts the scope on target. Adds about 4 seconds to a goto.
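The goto logic described above can be sketched roughly as follows. This is a simplified illustration rather than the real ScopeDog code: `move_steps` and `plate_solve` are hypothetical stand-ins for the motor and finder interfaces, positions are plain (Az, Alt) pairs in degrees, and sky motion during the slew is ignored. A simulated mount that under-delivers each move by 3% stands in for the hardware.

```python
def wrap_degrees(angle):
    """Wrap an angle difference into the range [-180, 180) degrees."""
    return (angle + 180.0) % 360.0 - 180.0


def goto_with_solve(start, target, steps_per_degree, move_steps, plate_solve,
                    tolerance_deg=0.05, max_moves=4):
    """Slew by step count, then let plate solves correct whatever error remains."""
    position = start
    for _ in range(max_moves):
        d_az = wrap_degrees(target[0] - position[0])
        d_alt = target[1] - position[1]
        if abs(d_az) <= tolerance_deg and abs(d_alt) <= tolerance_deg:
            break  # on target, no further correction needed
        move_steps(round(d_az * steps_per_degree), round(d_alt * steps_per_degree))
        position = plate_solve()  # absolute position from the finder
    return position


class SimulatedMount:
    """Stand-in for the hardware: every move falls 3% short of what was asked."""

    def __init__(self, az, alt, steps_per_degree):
        self.az, self.alt = az, alt
        self.steps_per_degree = steps_per_degree
        self.moves = 0

    def move_steps(self, az_steps, alt_steps):
        self.az = (self.az + 0.97 * az_steps / self.steps_per_degree) % 360.0
        self.alt += 0.97 * alt_steps / self.steps_per_degree
        self.moves += 1

    def plate_solve(self):
        return (self.az, self.alt)


mount = SimulatedMount(10.0, 20.0, 1000.0)
final = goto_with_solve(mount.plate_solve(), (200.0, 60.0), 1000.0,
                        mount.move_steps, mount.plate_solve)
print(final, mount.moves)
```

The first pass through the loop is the goto by step count; any later passes are the solve-and-correct stage, which is why a goto costs a few extra seconds.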

Only about 10% of the core code needed rewriting, but the most difficult part was establishing a direct wifi link to SkySafari (previously this had been left to the Nexus DSC).

2023 Observing so far (Spoiler - it’s bad!)

Attended the Spring astro camp at Haw Wood Farm in Suffolk last month. Not the worst weather, but almost!

However the caravan I hire is cosy and I take most of my telescope drive development kit. With a lot of time to spare I made good progress on the mk4 ScopeDog code (more in next blog).

I made the right decision not to take the 18” Dobsonian, but instead my 100mm Miyauchi binoculars. These proved very suitable for quickly taking advantage of the few clear spells in between rain and wind.

mk3 ScopeDog update

After a lot more hours on the scope simulator, I’m happy the mk3 not only works OK, but is better than previous versions. The new handbox is a real pleasure to use - adding a text display and buttons to the scope drive opens up new possibilities. The OLED display is nice and can be user dimmed down to suit.

The simplicity of just plugging a camera straight into the scope drive box is great, and with the scope drive and finder software now integrated, lots more features can be added.

In my view, this is the future for Dobsonian drives!

I’m now experimenting with a ‘mk4’ - no DSC or encoders needed. It will just use the stepper motor count and finder solves to manage scope position.